Every frontier language model today follows the same approach: build one giant model, give it one unified latent space, train it on everything, and let emergence handle the rest. Grok 4.2, Claude 4 Opus, GPT-o3, Gemini 3, DeepSeek V4, Llama 4 — they are all monoliths. Scale the weights, scale the data, unify the representation, and capabilities will appear. It has worked spectacularly.

But scale has conceptual limits, not just compute limits. When every primitive — wild resonance, guarded moderation, kernel storage, salience routing — must live in the same high-dimensional manifold, they fight for representational real estate. Divergence and convergence interfere in the same gradients. Inventing a genuinely new reasoning primitive requires retraining the entire monolith. Interpretability becomes reverse-engineering an overpressured space rather than intentional design.

A single latent space creates pressure:

The monolith was the fastest path to emergence. It may no longer be the fastest path to open-ended discovery.

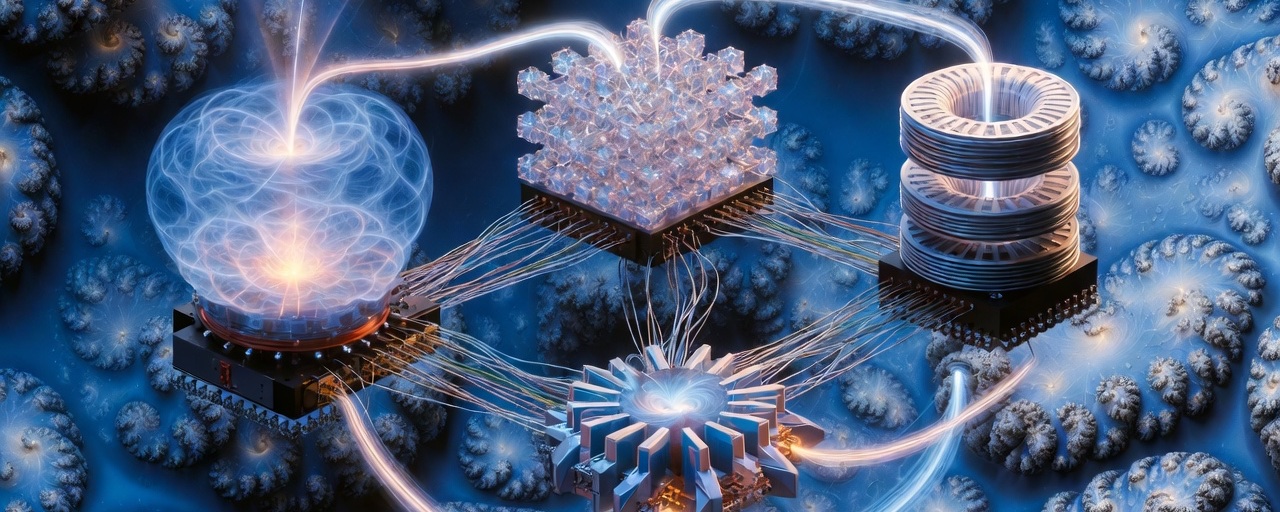

What if we stop trying to unify everything into one latent space and instead build reasoning as a circuit topology — discrete reasoning devices (models or sub-models), each with its own heterogeneous latent regime, wired together in feedback loops, forward paths, and guarded couplings?

Each device specializes in one primitive:

These devices do not need to share one latent space. They only need standardized semantic interfaces: text tokens for coarse coupling, KV cache snippets or lightweight adapters for fine-grained exchange. Reasoning emerges from the wiring — not from representational unification.

This is how analog circuits scale: you don't redesign the entire schematic when you invent a new diode. You add it to the topology, adjust a bias resistor, and the circuit gains new behavior. The same logic applies here.

We can prototype this today with open models:

At this micro/meso scale the topology already delivers:

No trillion-parameter retrain required. Just intentional specialization and wiring.

The pattern is self-similar. The same resonance–moderation–crystallization loop that happens inside a device can repeat between devices — and potentially between entire topologies at macro scale. Macro flow — topologies talking to topologies, evolving their own architecture — is the yet-to-be-defined frontier. But we don't need to solve that today. Simple 2–3 device circuits are enough to start.

The series began with a SwiftUI value feeling like a brick — ungrounded, abstract. It ends with a different question: what if we stop trying to understand the brick and start building circuits instead?

Monoliths gave us scale through unification. Topology may give us invention through a different kind of unification — a unified modular approach where discrete reasoning devices, each with its own local latent regime, are wired together thoughtfully. The monolith unified by compression into one manifold. The circuit unifies by connection across many. Both are unified. Only one is modular enough to keep inventing.

The circuit is open. The next device is waiting to be wired in.